JULY 2024

Should AI support for architectural design show its reasoning?

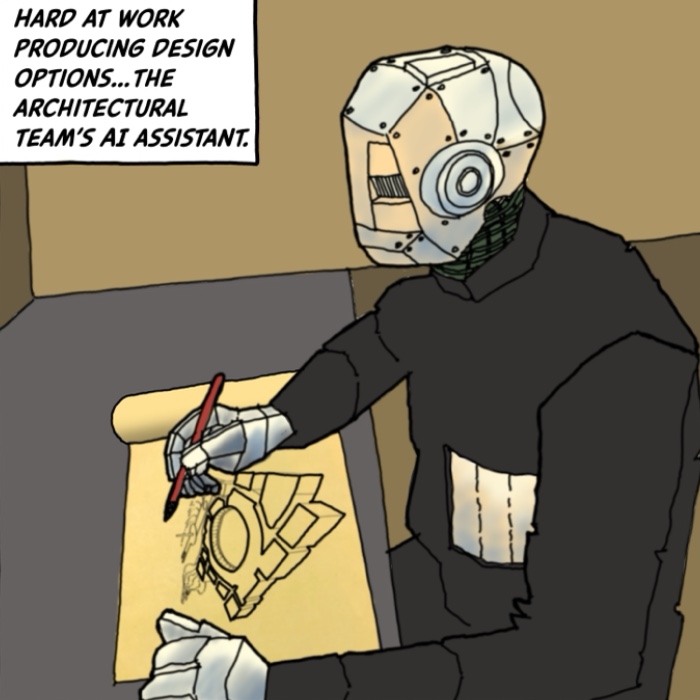

Imagine that, in the near future, you and your team are working hard on an architectural design project. You are supported by an AI advanced enough to produce option after option for the design, and those schemes are pretty good.

You now need to premiate an option, choosing one scheme over the others. This is the moment of choice that gives direction to the project. Understanding how a design decision was reached can be as important as the design outcome itself, because that understanding is what lets us make the best decision at the moment of choice.

Your AI assistant is providing you with designs, but not the ideas behind them. It is showing what the design is, but not why. To properly understand what you are seeing, and its inchoate latent potential, you will need more knowledge.

Back when design options were developed by humans, we expected that each scheme could be explained. More than that, we could question those explanations. We could ask "Why is the entry located there?" and "How does the design respond to the urban morphology of the locale?" We could see "what it is" and, through interaction, also understand "why it is what it is".

By comparison, the AI algorithm producing the options is opaque: we cannot question it about its choices in the same way.

How do we choose a design from a bunch of faits accomplis? We could ask the AI program to nominate the optimal design, and simply accept that choice without question or understanding. Are we willing to do that? Or instead should we require the AI to provide explanations for each important design move? Will we need to arrange for people or computer programs to explore each of the designs to provide an explanation?